Finally, Hyper-G (cf. 2.1.15). This project also starts in 1989, at Graz University of Technology. Hermann Maurer and his team aim for a kind of networked version of Microcosm. Many of the flaws of the Web are identified and addressed. But when Hyper-G was presented in 1995, it was too late for the new product to gain momentum. The Web was exploding rather than merely growing and left no chance for Hyper-G. Still, it is instructive to contrast the World Wide Web with the competing approach of Hyper-G, or HyperWave, as the commercial version is named.

2.1.15 Hyper-G/HyperWave

in Vision and Reality of Hypertext and Graphical User Interfaces

According to Hermann Maurer, Frank Kappe, and Keith Andrews, the World Wide Web belongs, together with WAIS and Gopher, to the first generation of information systems on the Internet. The research team around Maurer argues that Hyper-G has qualities that qualify it as a second-generation system. In The Hyper-G Network Information System [Andrews/Kappe/Maurer 95] they identify six major shortcomings of the Web. Hyper-G will be presented on the basis of these points.

First on hyperlinking [Ibid., p. 2]:

[The Web]* does not provide any information structuring facilities beyond hyperlinks; its links are one-way (there is no way of determining which other documents refer to a particular document, leading to inconsistencies when documents are moved or deleted – the frequent “dangling links”) and embedded within text documents (there are no links from other kinds of documents).

\* The original text uses “W3” here. I have changed it to “the Web” for readability and consistency.

Hyperlinks in HTML are absolute addresses to pages on other Web servers. There is no automatic mechanism to update these links if the destination page changes its name or location. This leads to “broken links” and annoying “HTTP error 404 – file not found” messages. Hyper-G, in contrast, automatically maintains the consistency of links. To this end, Hyper-G servers exchange linking information between sites. Much like in Microcosm, a database on each Hyper-G server stores the linking data separately from the documents.
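The idea of keeping links in a database apart from the documents can be illustrated with a minimal sketch. It is not Hyper-G's actual implementation: the class `LinkDatabase`, its method names, and the file names are assumptions made up for this example. It only shows why a separate, bidirectional link store makes it possible to answer "who links here?" and to repair links when a document moves.

```python
from collections import defaultdict


class LinkDatabase:
    """Toy link store kept apart from document content (hypothetical API)."""

    def __init__(self):
        self._outgoing = defaultdict(set)  # source -> set of targets
        self._incoming = defaultdict(set)  # target -> set of sources

    def add_link(self, source, target):
        # Record the link in both directions so it can be followed either way.
        self._outgoing[source].add(target)
        self._incoming[target].add(source)

    def links_to(self, target):
        """Return the documents that refer to `target`, sorted by name."""
        return sorted(self._incoming.get(target, ()))

    def links_from(self, source):
        """Return the documents that `source` refers to, sorted by name."""
        return sorted(self._outgoing.get(source, ()))

    def move(self, old, new):
        """Rename a document and repoint every link that touches it."""
        for source in self._incoming.pop(old, set()):
            self._outgoing[source].discard(old)
            self._outgoing[source].add(new)
            self._incoming[new].add(source)
        for target in self._outgoing.pop(old, set()):
            self._incoming[target].discard(old)
            self._incoming[target].add(new)
            self._outgoing[new].add(target)


# Usage with made-up document names.
db = LinkDatabase()
db.add_link("a.html", "b.html")
db.add_link("c.html", "b.html")
db.move("b.html", "archive/b.html")
print(db.links_to("archive/b.html"))  # ['a.html', 'c.html']
print(db.links_from("a.html"))        # ['archive/b.html']
```

Because the documents themselves never contain the target address, moving a document requires only an update to the database, not an edit of every referring page.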

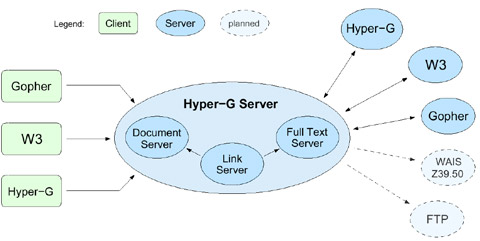

Fig 2.13 The architecture of Hyper-G. Web clients and Hyper-G clients have Internet access to a Hyper-G server. The server itself exchanges information with other Hyper-G servers. In addition, it can also receive data from Web servers and other resources.

On searching [Ibid.]:

Like Gopher, [the Web] has no native search facilities, but relies on external search engines such as Wais, leading to patchy server-by-server provision of search facilities by individual sites and no real-time cross-server searches (searches in previously generated cross-server indices are available).

Google and Yahoo have been mentioned above. But services like these can neither cope with millions of Web pages nor guarantee complete coverage of a specific server. The user is left uncertain whether an empty search result means that no pages really match the text query. Hyper-G, in contrast, has sophisticated search capabilities built in. The Hyper-G protocol allows searches to be initiated on several Hyper-G servers simultaneously. The results can be presented in the layout best suited to the preferences of the user. This leads to consistency in the user interface no matter where the search is performed.
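A simultaneous search across several servers, with results merged into one uniform form, might look roughly like the following sketch. It does not use the Hyper-G protocol; the server names, the in-memory `SERVERS` dictionary, and both functions are assumptions invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical in-memory stand-ins for three servers: name -> {title: text}.
SERVERS = {
    "graz": {
        "Hyper-G overview": "links collections search",
        "Harmony": "browser and editor",
    },
    "auckland": {"Tours": "scripted sequence of links"},
    "vienna": {"Gopher notes": "menus only"},
}


def search_server(name, query):
    """Return (server, title) pairs on one server whose text contains the query."""
    docs = SERVERS[name]
    return [(name, title) for title, text in docs.items() if query in text]


def federated_search(query):
    """Query every server in parallel and merge the hits into one sorted list."""
    with ThreadPoolExecutor() as pool:
        per_server = pool.map(lambda name: search_server(name, query), SERVERS)
        return sorted(hit for hits in per_server for hit in hits)


print(federated_search("links"))
# [('auckland', 'Tours'), ('graz', 'Hyper-G overview')]
```

In this toy setting an empty result list really does mean that none of the queried servers holds a match, which is the guarantee an external index cannot give.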

On dynamic content [Ibid.]:

The flexibility provided by cgi is achieved at great cost: the uniformity of the interface disappears, different [Web] servers behave differently – resulting in the “Balkanisation” (to quote Ted Nelson) of the Web into independent “W3 Empires”.

The syntax for URIs allows queries to be specified instead of just fixed pages. The ‘?’-example has shown how extra information can be transmitted to the server. The protocol used here is the Common Gateway Interface (CGI). A CGI script can instruct the Web server to start an arbitrary application program to calculate something, for example to connect to an external database to retrieve some data. Finally, the results are presented on an HTML page.
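The path from a ‘?’-query to a generated HTML page can be sketched as follows. Under CGI the server hands the part after the ‘?’ to the program in the `QUERY_STRING` environment variable. The URL, the parameter names, and the function `handle` are made up for this example, and a plain dictionary stands in for the process environment.

```python
import html
from urllib.parse import urlsplit, parse_qs


def handle(environ):
    """Build an HTML page from the CGI QUERY_STRING variable."""
    params = parse_qs(environ.get("QUERY_STRING", ""))
    # parse_qs maps each name to a list of values; take the first one.
    author = params.get("author", ["unknown"])[0]
    # Escape the value so that user input cannot inject markup.
    return f"<html><body><p>Results for {html.escape(author)}</p></body></html>"


# Hypothetical request URL; everything after '?' is the query.
url = "http://www.example.org/cgi-bin/lookup?author=maurer&year=1995"
query = urlsplit(url).query
page = handle({"QUERY_STRING": query})

print(query)  # author=maurer&year=1995
print(page)   # <html><body><p>Results for maurer</p></body></html>
```

What the program does with the parameters, and how the resulting page looks, is entirely up to each script, which is the source of the non-uniformity criticised in the quotation above.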

This point is related to the next on large structures [Ibid.]:

Also, there is little support for the maintenance of large datasets, so it is not uncommon to see several [Web] servers within a single organisation, each a fundamentally separate interactive context.

Hyper-G provides an architecture that allows structure to be defined independently of the distribution of the files among the servers. Files can belong to collections, which may themselves be part of other collections. Each file is a member of at least one collection, but it can be associated with several others at the same time. A special kind of collection is a cluster. It is used to group a set of files as a logical unit. It can be used, for example, to refer to a document and its translated versions as one entity, or to form multimedia aggregates such as a movie with an associated text transcription file. Collections are well integrated into the data model. In particular, this means that they can also be the target object of hyperlinks in Hyper-G.
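The collection model described above can be approximated with a small data structure. This is a sketch under stated assumptions, not Hyper-G's data model: the class names `File`, `Collection`, and `Cluster`, the `server` attribute, and all file and server names are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class File:
    name: str
    server: str  # where the file is physically stored


@dataclass
class Collection:
    name: str
    members: list = field(default_factory=list)  # files or nested collections

    def add(self, *items):
        self.members.extend(items)
        return self

    def files(self):
        """Yield every file below this collection, following nested collections."""
        for member in self.members:
            if isinstance(member, Collection):
                yield from member.files()
            else:
                yield member


class Cluster(Collection):
    """A collection whose members form one logical unit."""


en = File("thesis.en.htf", server="graz")
de = File("thesis.de.htf", server="hamburg")
thesis = Cluster("thesis").add(en, de)     # a document and its translation
hypertext = Collection("hypertext").add(thesis)
teaching = Collection("teaching").add(en)  # same file, second collection
root = Collection("root").add(hypertext, teaching)

link_target = thesis  # a link may point at a collection, not only at a file

print(sorted({f.name for f in root.files()}))
# ['thesis.de.htf', 'thesis.en.htf']
```

Note that the hierarchy says nothing about where a file lives: the two members of the cluster sit on different (hypothetical) servers, yet they are addressed as one unit.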

Barry Fenn and Hermann Maurer add tours as another form of aggregation. A tour is «a scripted sequence of links, activated between a series of documents or clusters, which the user may just sit back and watch like a television program» (in Harmony… on an Expanding Net [Fenn/Maurer 94, p. 35]). The user can stop at any time and continue to explore the content on her own. Tours later evolved into a specialization of collections, called sequences [Maurer et al. 98, p. 284]. They are collections with a sort order imposed on their members. Navigation through sequences can use the order of items to offer commands like NEXT, PREVIOUS, FIRST and LAST. Note that sequences are orthogonal to link structures. They also match Memex’s notion of trails much better than the concept of hyperlinking does.
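Navigation through a sequence with the commands NEXT, PREVIOUS, FIRST and LAST can be sketched as a cursor over an ordered collection. The class `Sequence`, its method names, and the decision to stay on the first or last item instead of wrapping around are assumptions of this example, not documented Hyper-G behaviour.

```python
class Sequence:
    """An ordered collection with a cursor (hypothetical sketch)."""

    def __init__(self, items):
        if not items:
            raise ValueError("a sequence needs at least one item")
        self.items = list(items)
        self.position = 0

    def current(self):
        return self.items[self.position]

    def first(self):
        self.position = 0
        return self.current()

    def last(self):
        self.position = len(self.items) - 1
        return self.current()

    def next(self):
        # Assumption: stay on the last item instead of wrapping around.
        self.position = min(self.position + 1, len(self.items) - 1)
        return self.current()

    def previous(self):
        # Assumption: stay on the first item instead of wrapping around.
        self.position = max(self.position - 1, 0)
        return self.current()


tour = Sequence(["intro", "architecture", "harmony"])
print(tour.next())      # architecture
print(tour.last())      # harmony
print(tour.next())      # harmony
print(tour.previous())  # architecture
print(tour.first())     # intro
```

None of these commands consults a link: the order alone drives navigation, which is what makes sequences orthogonal to the link structure.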

On authoring [Andrews/Kappe/Maurer 95, p. 2]:

The Web today is very much “read-only”, in the sense that information providers prepare data sets in which information consumers can generally only browse.

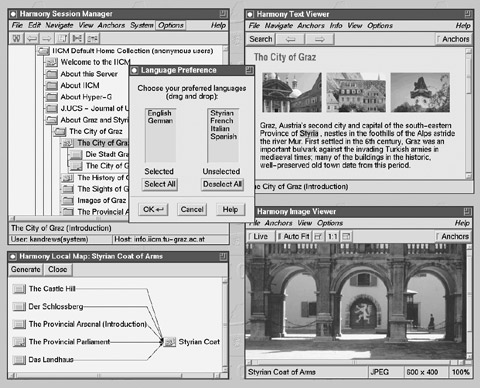

Fig 2.14 Harmony, the Hyper-G browser and editor for the X Window System. The Session Manager (top left) shows the hierarchy of collections. The Text Viewer (top right) uses an SGML parser to display HTML or HTF documents. Images can be displayed inline or separately with the Image Viewer (bottom right). The Local Map (bottom left) shows on request the incoming and outgoing links for a given document.

The client application program for Hyper-G is Harmony. Fig. 2.14 shows the Session Manager, the Text Viewer, the Image Viewer and the Local Map window. Hyper-G has its own native markup format for hypertext files, called HTF. It is, like HTML, an application of SGML, and the Text Viewer can display both formats. Moreover, audio files, MPEG movies, 3D scenes, and PostScript files are directly supported by Harmony.

Unlike the dominant Web browsers, Microsoft Internet Explorer and Netscape Communicator, the Hyper-G client Harmony is also an authoring tool. Links can be created and followed in documents of all the file formats just mentioned. In Hyper-G, even images, movies and 3D scenes can contain links.

Instead of HTTP, the Harmony Document Viewer Protocol (DVP) is used between Hyper-G servers and clients. This is necessary to support the various browsing, editing, and linking functions.

On scalability [Ibid.]:

Finally, although its url mechanism endows [the Web] with scalability in terms of number of servers, it is not scalable in terms of number of users. Extremely popular [Web] servers […] can often become overwhelmed by tens of thousands of users, necessitating their physical mirroring to many alternative sites.

Replication mechanisms and caching are built into the architecture of Hyper-G. The servers can adapt to variable user traffic and are capable of rerouting the load to less heavily used servers.

